Capability Densing in LLM Systems

What does it mean for you and your business?

A recent paper on smaller language models has some important implications. It describes how capability per parameter in large language models has increased over time, a trend called densing. Densing makes smaller models more competitive and CPU deployment more realistic, even for tasks that depend on generative capabilities, such as those involving long context or complex reasoning.

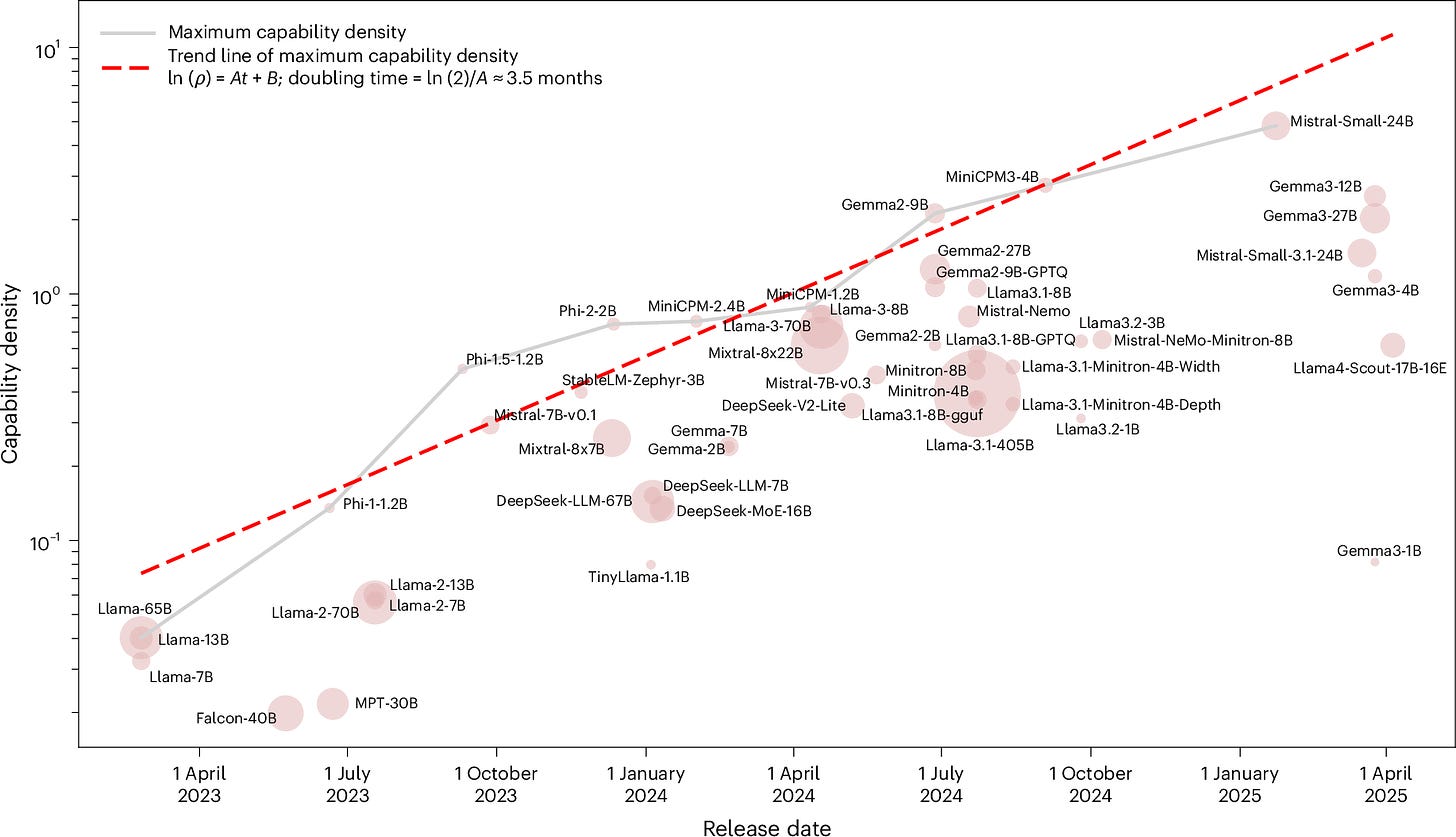

This comes from the analysis in “Densing Law of LLMs” (2025) by Chaojun Xiao and others. The core quantity introduced in the paper is capability density, defined relative to a baseline scaling curve. The authors analysed dozens of open models released over a recent multi-year window and evaluated them on standard benchmarks covering reasoning, coding, and multitask language understanding.

For each model's benchmark score, the authors calculated the effective number of parameters a reference model would need to match that score, and divided it by the model’s actual parameter count. A density above 1 means the model performs like a larger reference model.
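The ratio described above can be sketched in a few lines. This is only an illustration: the reference curve here is a made-up sigmoid in log10(parameters) with assumed constants `A` and `B`, not the function fitted in the paper, and all function names are hypothetical.

```python
import math

# Hypothetical reference curve (NOT the paper's fitted function):
# benchmark score modelled as a sigmoid in log10(parameter count).
A = 1.5   # assumed steepness of the curve
B = 10.0  # assumed midpoint: score 0.5 at 10^10 (10B) parameters


def reference_score(params: float) -> float:
    """Score a reference model with `params` parameters would achieve."""
    return 1.0 / (1.0 + math.exp(-A * (math.log10(params) - B)))


def effective_params(score: float) -> float:
    """Invert the reference curve: parameters needed to reach `score`."""
    if not 0.0 < score < 1.0:
        raise ValueError("score must lie strictly between 0 and 1")
    logit = math.log(score / (1.0 - score))
    return 10.0 ** (B + logit / A)


def capability_density(score: float, actual_params: float) -> float:
    """Effective parameter count divided by actual parameter count."""
    if actual_params <= 0:
        raise ValueError("actual_params must be positive")
    return effective_params(score) / actual_params


# A 5B model scoring what the reference curve assigns to a 10B model
# has a density of about 2 under these assumed constants.
example_density = capability_density(reference_score(1e10), 5e9)
```

A density of 1 means the model sits exactly on the reference curve; values above 1 mean it does more with fewer parameters than the reference family would.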

Plotting the best capability density achieved at each point in time yields a clear upward trend. The trend remains visible under alternative benchmark choices and under attempts to control for data contamination. The main plot from the article (licensed under Creative Commons Attribution 4.0) shows this trend; please note the log scale on the y-axis.

The authors found that among open models, the maximum observed capability density has increased roughly exponentially over time. In the data analysed, the frontier density doubles roughly every three to four months. The paper does not claim that this is a universal or permanent law.
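To make "doubling time" concrete, here is a minimal sketch of how one might estimate it from a series of frontier densities: fit a straight line to the natural log of density against time, then divide ln 2 by the slope. The data points below are synthetic and generated with an assumed three-month doubling time; they are not taken from the paper, and `fit_doubling_time` is a hypothetical helper.

```python
import math


def fit_doubling_time(months, densities):
    """Least-squares fit of ln(density) = slope * month + intercept.

    Returns the doubling time in months, ln(2) / slope.
    """
    if len(months) != len(densities) or len(months) < 2:
        raise ValueError("need at least two (month, density) pairs")
    logs = [math.log(d) for d in densities]
    mean_t = sum(months) / len(months)
    mean_y = sum(logs) / len(logs)
    cov = sum((t - mean_t) * (y - mean_y) for t, y in zip(months, logs))
    var = sum((t - mean_t) ** 2 for t in months)
    if var == 0:
        raise ValueError("months must not all be identical")
    slope = cov / var
    if slope <= 0:
        raise ValueError("density is not growing; no doubling time")
    return math.log(2) / slope


# Synthetic frontier densities (assumption: doubling every 3 months).
months = [0, 6, 12, 18, 24]
densities = [2 ** (t / 3.0) for t in months]

doubling_months = fit_doubling_time(months, densities)
```

On a log-scale y-axis, exponential growth of this kind appears as a straight line, which is why the log scale matters when reading such a plot.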

Let’s look at the reasons behind this phenomenon.

Why density has increased

The paper attributes most of the observed gains to reductions in inefficiency rather than new task capabilities. Early models underused parameters due to suboptimal training setups. Improvements in data curation, instruction tuning, optimisation, architecture details, and synthetic data generation allowed newer models to achieve similar benchmark performance with fewer parameters. Densing, in this sense, measures how much wasted capacity has been removed.

How long the trend may hold

The authors argued that exponential improvement in density should not be expected indefinitely. Capability density is bounded by the scaling frontier itself, by benchmark saturation, and by limits on data quality and training efficiency. As models approach the upper envelope of what current architectures and data allow, gains should slow. The most likely outcome is a transition from a steep exponential regime to a slower one, followed by a plateau unless new training paradigms or architectures shift the frontier.

Implications

Densing helps explain why smaller, newer models often match or exceed much larger older ones. It also suggests that cost reductions and deployment gains can continue even if parameter counts stabilise. At the same time, it cautions against extrapolating short-term exponential trends too far into the future. Efficiency gains have limits, and long-term progress likely depends on changes that move the scaling frontier itself rather than further compression towards it.

For practitioners, the most important implication is that smaller models can be expected to match larger ones, making low-latency, CPU-based inference realistic for many tasks. This could mean substantial cost savings and greater deployment flexibility.

We are already seeing models in the 30B class (say Kimi K2.5, a mixture-of-experts model with roughly 30B active parameters, or similar) competing with much larger frontier models such as GPT-5, whose parameter count has not been published. If the trend holds, within a few months you may be able to replace such a model with a 7B–13B model of similar performance for many tasks, especially single-turn inference, code generation, or reasoning benchmarks.
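As a rough sanity check on the "few months" estimate, the arithmetic can be written down directly: if density doubles every T months, the parameter count needed for a fixed capability halves every T months, so shrinking from one size to another takes T times the base-2 logarithm of their ratio. The three-month doubling time below is an assumption for illustration, and `months_until_target` is a hypothetical helper.

```python
import math


def months_until_target(current_params_b, target_params_b, doubling_months):
    """Months until a model of `target_params_b` billion parameters could
    match today's `current_params_b` billion model, assuming capability
    density keeps doubling every `doubling_months` months."""
    if min(current_params_b, target_params_b, doubling_months) <= 0:
        raise ValueError("all arguments must be positive")
    halvings = math.log2(current_params_b / target_params_b)
    return doubling_months * halvings


# Assumption for illustration only: density doubles every 3 months.
ASSUMED_DOUBLING_MONTHS = 3.0

months_to_13b = months_until_target(30, 13, ASSUMED_DOUBLING_MONTHS)
months_to_7b = months_until_target(30, 7, ASSUMED_DOUBLING_MONTHS)
```

Under that assumption the estimate comes out at roughly 3.6 months for a 13B target and roughly 6.3 months for a 7B target. A longer doubling time scales both figures proportionally, and the calculation says nothing about whether the trend will actually hold that long.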

As for model choice, you can focus on model tuning, instruction and data alignment, and efficient inference libraries instead of scaling parameters blindly.

Chelsea AI specialises in bespoke systems including CPU-only and on-premise systems.